Voice AI has crossed a threshold of perceived reliability, capability and cost. It is now being rapidly rolled out to cover transcription, synthetic voice, conversational assistants and voice agents, all at near-human speed and capability.

The market has noticed. Globally, the voice recognition market hit $18.39 billion in 2025, projected to reach $61.71 billion by 2031.

The MENA region is very much part of this growth story. Investment is flowing in and voice AI is already at work across the region: cutting documentation time in hospitals, handling millions of contact center calls, and helping local KFC drive-throughs to go conversational.

In a 2025 survey of GCC organizations, AI adoption had risen from 62 to 84 percent, yet only 31 percent reported scaled deployment. In voice AI specifically, that gap is most often a language problem.

The glaringly obvious problem

The overwhelming majority of voice AI products are simply not built for this region. In a recent survey by Researchscape International, 92 percent of UAE respondents said they would prefer a smart or AI assistant specifically designed for the Middle East.

That makes sense, because most voice AI products share the same set of original sins: built as English models first, trained on synthetic data, tested in ideal lab conditions. This approach can’t keep up with the reality of a noisy Gulf contact center or a bustling Cairo hospital ward.

Most voice AI products are monolingual. And monolingual is no use for a language that is not monolithic.

One model to hold them both

Most of the market is still building this way. And while my team and I at Speechmatics have been building differently for 15 years, we were still falling short.

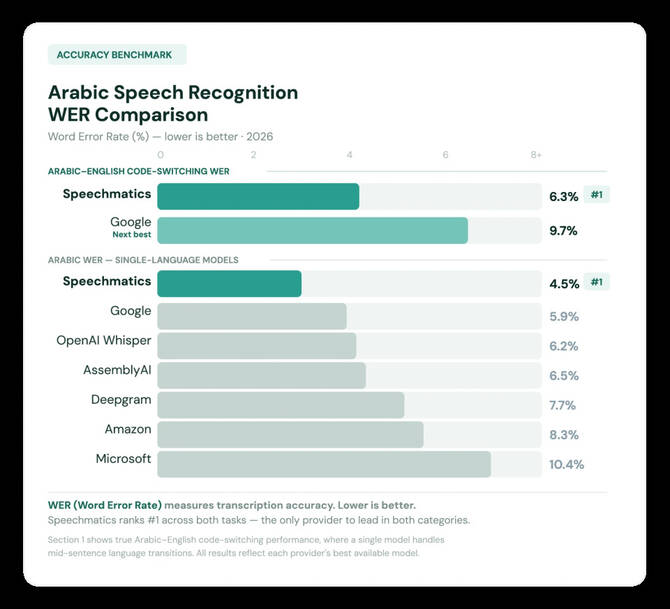

That is what pushed us to build the world‘s first medical Arabic-English bilingual model. One model, both languages, trained on real voices from across the region. On recent benchmarks, it is performing 35 percent above the next-best model.

Code-switching: the wall no one sees coming

Arabic is not a single language in any practical sense. Gulf, Egyptian, Levantine and Maghrebi dialects carry distinct vocabulary, phonology and rhythm. Different people will pronounce the same word differently.

I‘m Egyptian. My accent carries differently to a Gulf speaker’s, and both of us carry differently to someone from Tunis or Beirut. A model trained primarily on broadcast Modern Standard Arabic will struggle with many real conversations.

We made sure we had examples from different dialects and dialect-specific training data because enterprises need products and services that cross borders.

In most Arabic-speaking countries, people often switch between English and their dialect, particularly between formal and conversational speech. It is the default register for educated professionals, such as a doctor naming a drug in English or a finance officer mixing both in a single sentence.

The Gulf also has one of the world’s highest concentrations of expat communities, where Arabic and English are the working languages. If someone switches mid-sentence, running two models in parallel means one will think this is English and the other Arabic.

For optimum accuracy and speed, you need a single model that knows both languages and can differentiate between them as they are being spoken.

When errors stop being typos

In regulated sectors, a transcription error is a potential liability. Nowhere more so than in medical transcription, where a weak model that drops a drug name puts that error straight into the patient record, creating more work for already stretched doctors.

A dedicated domain model does not just reduce those errors. It allows us to achieve even higher accuracy in real settings. That is why we built a dedicated Arabic-English medical model trained on clinical vocabulary and real code-switching.

Our new medical model was tested in the real world by a retired Egyptian anaesthetist (also my father!).

Where the model runs matters as much as how it performs

Enterprises across the region are asking whose infrastructure their sensitive data runs on. Saudi Arabia’s Personal Data Protection Law came into full enforcement in September 2024, and the UAE’s Federal Data Protection Law has been in force since January 2022.

Both have concrete implications for where voice data is processed and stored, and cross-border processing triggers obligations many vendors cannot meet.

Beyond compliance, MENA enterprises are asking whose infrastructure their sensitive data runs on. The current geopolitical climate has made that question harder to ignore, and deployments here reflect it: on-prem and on-device, built around sovereignty from the outset.

All these things are tangible improvements that will really make a difference: in hospital systems, in government service fronts, and in the contact centers handling tens of millions of interactions a year across the region.

But the models have to work for the people using them. The gap is measurable, but it is closing.

- The writer, Yahia Abaza, is senior product manager at Speechmatics.